Data Formats


The CS system supports the following data formats:

16-bit floating-point formats:

IEEE half-precision (binary16), also known as FP16.

CB16, a Cerebras 16-bit format with 6 exponent bits.

32-bit floating-point format:

IEEE single-precision (binary32), also known as FP32.

The 16-bit arithmetic uses 16-bit words and is always aligned to a 16-bit boundary.

The single-precision arithmetic uses even-aligned register pairs for register operands and 32-bit aligned addresses for memory operands.

Note

Memory is 16-bit word addressable. It is not byte addressable.
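The addressing rules above can be sketched as a small alignment check expressed in 16-bit words. This is only an illustration: it assumes that a 32-bit aligned address corresponds to an even word address, and the names `FORMAT_BITS`, `words_per_element`, and `is_aligned` are hypothetical and not part of any Cerebras API.

```python
WORD_BITS = 16  # memory is addressed in 16-bit words, not bytes

# Operand widths in bits for the supported formats.
FORMAT_BITS = {"fp16": 16, "cb16": 16, "fp32": 32}


def words_per_element(fmt: str) -> int:
    """Number of 16-bit words that one element of the given format occupies."""
    return FORMAT_BITS[fmt] // WORD_BITS


def is_aligned(word_address: int, fmt: str) -> bool:
    """Check whether a word address is suitably aligned for a memory operand.

    Assumption: a 32-bit aligned address is an even 16-bit word address.
    """
    return word_address % words_per_element(fmt) == 0


print(is_aligned(3, "fp16"))  # any word address works for 16-bit operands
print(is_aligned(3, "fp32"))  # odd word address, not 32-bit aligned
```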

FP16

The FP16 implementation follows the IEEE standard for binary16 (half-precision), with a 5-bit exponent and a 10-bit explicit mantissa.

| Sign | Exponent | Mantissa |
|------|----------|----------|
| 1 bit | 5 bits | 10 bits |
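As an illustration of this layout, the following sketch splits a value into its binary16 bit fields using Python's standard `struct` module, whose `e` format code is IEEE half precision. The helper name `fp16_fields` is hypothetical, and the sketch runs on a host machine rather than on the CS system.

```python
import struct


def fp16_fields(value: float) -> tuple:
    """Return the (sign, exponent, mantissa) bit fields of value rounded to binary16."""
    # Pack as IEEE binary16, then reinterpret the same 2 bytes as an unsigned integer.
    (bits,) = struct.unpack("<H", struct.pack("<e", value))
    sign = bits >> 15                # 1 sign bit
    exponent = (bits >> 10) & 0x1F   # 5 exponent bits, stored with a bias of 15
    mantissa = bits & 0x3FF          # 10 explicit mantissa bits
    return sign, exponent, mantissa


print(fp16_fields(1.0))    # (0, 15, 0)
print(fp16_fields(-2.5))   # (1, 16, 256)
```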

CB16 Half-Precision

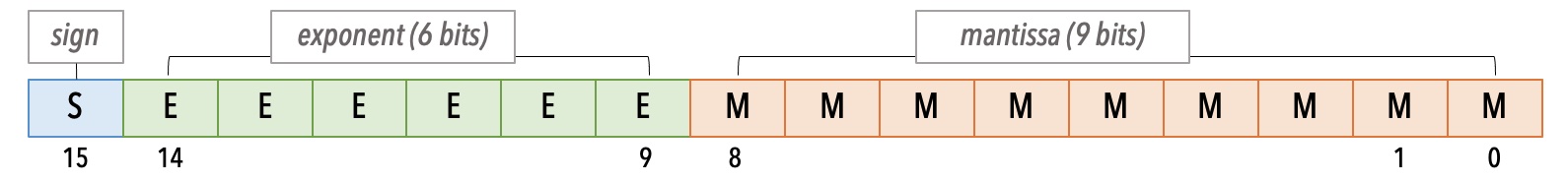

The CB16 is Cerebras’ 16-bit format, also referred to as cbfloat16. The CB16 is a floating-point format with 6-bit exponent and 9-bit explicit mantissa. This allows for double the dynamic range of FP16.

Fig. 2 Cerebras CB16 Format¶

With one more exponent bit than FP16, CB16 covers a wider range of values, which has the following benefits:

Denormals are far less frequent.

Dynamic loss scaling is not necessary on many networks.

Note

The cbfloat16 data format is different from the bfloat16 Brain Floating Point format.
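To get a feel for what one extra exponent bit buys, the sketch below computes the smallest normal and largest finite values of an IEEE-style format from its exponent and mantissa widths. The bias and special-value conventions of CB16 are not stated here, so applying IEEE 754 conventions to the 6-bit/9-bit layout is an assumption made purely for illustration; `normal_range` is a hypothetical helper.

```python
def normal_range(exp_bits: int, man_bits: int) -> tuple:
    """Return (min_normal, max_finite) for an IEEE-style binary format.

    Assumes bias = 2**(exp_bits - 1) - 1 and that the all-ones exponent is
    reserved for infinities and NaNs, as in IEEE 754. Whether CB16 follows
    these conventions is an assumption, not a documented fact.
    """
    bias = 2 ** (exp_bits - 1) - 1
    min_normal = 2.0 ** (1 - bias)
    max_finite = (2.0 - 2.0 ** -man_bits) * 2.0 ** bias
    return min_normal, max_finite


fp16_min, fp16_max = normal_range(exp_bits=5, man_bits=10)
cb16_min, cb16_max = normal_range(exp_bits=6, man_bits=9)  # hypothetical CB16 conventions

print(fp16_min, fp16_max)
print(cb16_min, cb16_max)
```

Under these assumptions the 6-bit exponent extends the representable range by many orders of magnitude in both directions, at the cost of one mantissa bit of precision.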

Using CB16

To use CB16, make sure the following two conditions are satisfied:

(1) In the parameters YAML file, in the `csconfig` section, set the key `use_cbfloat16` to `True`.

    csconfig:
        ...
        use_cbfloat16: True

(2) In your code, while constructing the `CSRunConfig` object, read the key-value pair for `use_cbfloat16` from the `csconfig` section of the YAML file. See the following:

    ...
    # get cs-specific configs
    cs_config = get_csconfig(params.get("csconfig", dict()))
    ...
    est_config = CSRunConfig(
        cs_ip=runconfig_params["cs_ip"],
        cs_config=cs_config,
        stack_params=stack_params,
        **csrunconfig_dict,
    )
    warm_start_settings = create_warm_start_settings(
        runconfig_params, exclude_string=output_layer_name
    )
    est = CerebrasEstimator(
        model_fn=model_fn,
        model_dir=runconfig_params["model_dir"],
        config=est_config,
        params=params,
        warm_start_from=warm_start_settings,
    )

Important

Ensure that both conditions (1) and (2) are satisfied.

FP32 Single-Precision

FP32 is equivalent to IEEE binary32 (single-precision), with an 8-bit exponent and a 23-bit explicit mantissa.

| Sign | Exponent | Mantissa |
|------|----------|----------|
| 1 bit | 8 bits | 23 bits |

Mixed-Precision Training

The CS system currently supports only mixed-precision training. Ensure that your models use:

16-bit input to arithmetic operations, and

FP32 accumulations.

Mixed precision, when used in combination with Dynamic Loss Scaling, can speed up training.
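The reason for pairing 16-bit inputs with FP32 accumulation can be seen in a small host-side experiment. The sketch below emulates rounding with Python's `struct` module (`e` is IEEE binary16, `f` is binary32) and sums the same 16-bit inputs with a 16-bit and a 32-bit accumulator. It is a simplified illustration of the numerical effect, not a model of how the CS hardware performs accumulation, and the helper names are hypothetical.

```python
import struct


def round_to(fmt: str, value: float) -> float:
    """Round a Python float to binary16 ('e') or binary32 ('f') precision."""
    return struct.unpack("<" + fmt, struct.pack("<" + fmt, value))[0]


def accumulate(inputs, acc_fmt: str) -> float:
    """Sum inputs, rounding the running total to acc_fmt after every addition."""
    total = 0.0
    for x in inputs:
        total = round_to(acc_fmt, total + x)
    return total


# 4096 copies of 0.1, each stored as a 16-bit input.
inputs = [round_to("e", 0.1)] * 4096

fp16_total = accumulate(inputs, "e")  # 16-bit accumulator
fp32_total = accumulate(inputs, "f")  # 32-bit accumulator

print(fp16_total)  # 256.0: the sum stalls once each addend is under half the spacing
print(fp32_total)  # 409.5
```

With a 16-bit accumulator the running sum stops growing at 256, because each addend is then smaller than half the spacing between adjacent representable values. The 32-bit accumulator keeps every contribution.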

See the example in Step 5: Ensure mixed precision.

Automatic Mixed Precision

This section explains the bfloat16 dtype, which is enabled in PyTorch for the GPT-2, GPT-3, GPT-J, and GPT-NeoX models as part of the automatic mixed precision mode.

Automatic mixed precision is a mode that allows training deep learning models with a mix of single-precision floating point (float32) and half-precision floating point formats such as float16 or bfloat16.

The benefits of the mixed precision mode lie primarily in performance. It is an optimization technique that allows you to train your networks faster without loss in quality. This works because some layers of a neural network, such as convolutional or linear layers, can be executed without a high level of precision, and they have proven to be much faster when executed with float16 or bfloat16. Other operations, such as reductions, often require a higher level of precision to maintain the same quality of results.

The trade-off between what should be cast to a half-precision dtype and what should be kept in single precision is captured in the recipe of the “automatic mixed precision algorithm”. In a nutshell, this recipe measures the performance of the network in default precision, then walks through adding casts to run the same network with a mixed precision setting, optimizing performance without hurting accuracy.

Mixed precision does not require you to specify bfloat16 as the half-precision floating point format; however, bfloat16 has shown some benefits over float16. The following sections discuss bfloat16 in more detail.

bfloat16 Floating Type

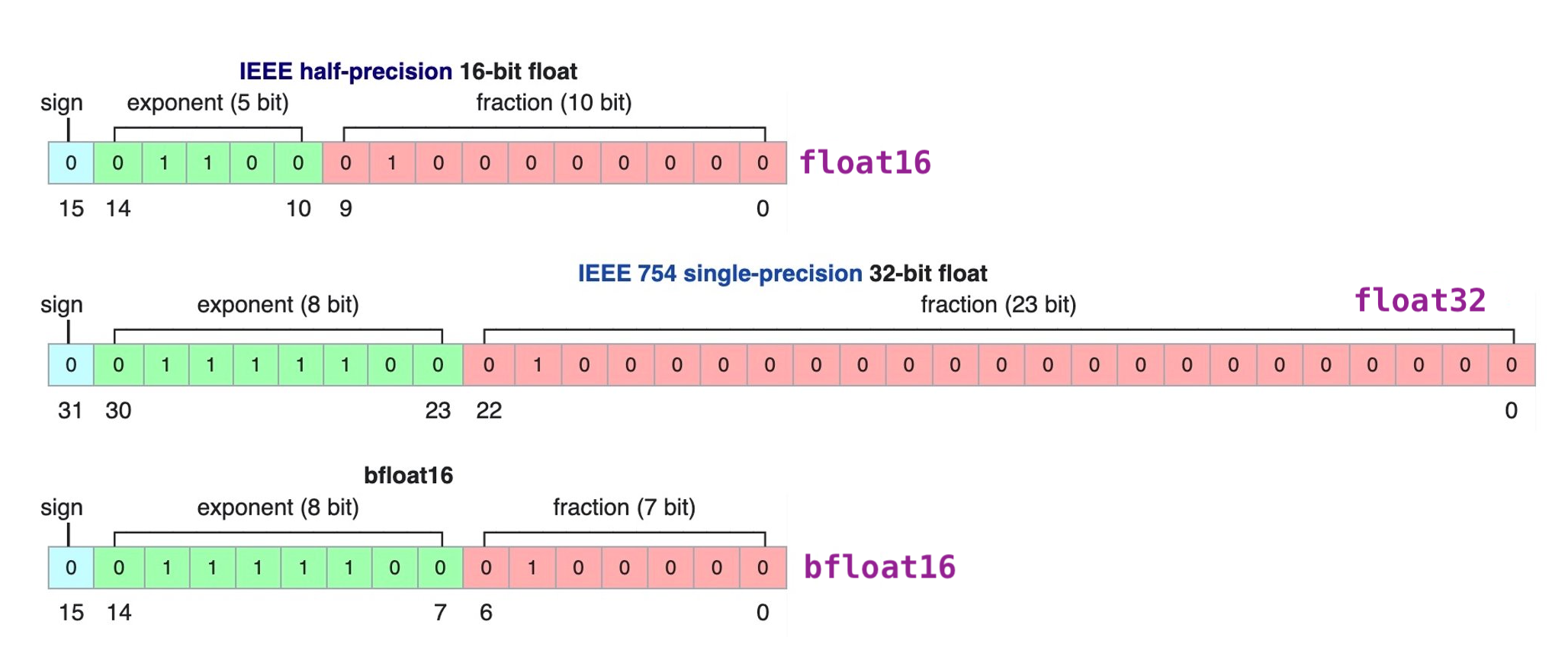

bfloat16 is a custom 16-bit floating point format for deep learning that’s comprised of one sign bit, eight exponent bits, and seven mantissa bits. This is different from the industry-standard IEEE 16-bit floating point, which was not designed with deep learning applications in mind. The figure below demonstrates the internals of three floating point formats: (a) float16: IEEE half-precision, (b) float32: IEEE single-precision, and (c) bfloat16.

We can see that bfloat16 has a greater dynamic range (number of exponent bits) than float16, which is identical to float32.
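Because bfloat16 shares its sign and exponent layout with float32, a bfloat16 value is in effect the upper 16 bits of the corresponding float32 encoding. The following host-side sketch uses that relationship to convert values with the standard `struct` module. The function names are hypothetical, the round-to-nearest-even step is one common choice and not necessarily what any particular framework does, and NaN inputs are not treated specially.

```python
import math
import struct


def float_to_bfloat16_bits(value: float) -> int:
    """Round a float to bfloat16 and return its 16-bit pattern.

    bfloat16 keeps the sign, the 8 exponent bits, and the top 7 mantissa bits
    of the float32 encoding, so the conversion works on the upper 16 bits.
    NaN inputs are not handled specially in this sketch.
    """
    (bits32,) = struct.unpack("<I", struct.pack("<f", value))
    # Round to nearest, ties to even, before dropping the low 16 bits.
    bits32 += 0x7FFF + ((bits32 >> 16) & 1)
    return (bits32 >> 16) & 0xFFFF


def bfloat16_bits_to_float(bits16: int) -> float:
    """Expand a bfloat16 bit pattern back to a Python float."""
    (value,) = struct.unpack("<f", struct.pack("<I", bits16 << 16))
    return value


big = 1.0e38
as_bf16 = bfloat16_bits_to_float(float_to_bfloat16_bits(big))
print(hex(float_to_bfloat16_bits(1.0)))  # 0x3f80
print(math.isfinite(as_bf16))            # True: 1e38 fits in the bfloat16 range

try:
    struct.pack("<e", big)               # IEEE float16 tops out at 65504
except OverflowError:
    print("1e38 overflows float16")
```

The same value that is finite in bfloat16 cannot be stored in float16 at all, which is the dynamic-range difference described above. The price is precision: bfloat16 keeps only 7 explicit mantissa bits.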

Experiments: Automatic Mixed Precision and bfloat16

We experimented with a large number of deep learning networks. Comparing the bfloat16 and float16 modes, we found that bfloat16 is 18% faster, is significantly less prone to weight growth, and shows better eval scores.

Benefits of bfloat16 vs. float16/32

Our experiments demonstrated that choosing bfloat16 is beneficial over pure float32 or mixed precision with float16. It improves training efficiency and saves space while maintaining the same accuracy level. This is because deep learning models are generally more sensitive to changes in the exponent than in the mantissa.

Training with the bfloat16 setting is more robust and less prone to underflows, overflows, and other numerical instabilities, much like training with pure float32. This is because the exponent size of bfloat16 is the same as that of float32.

How to Enable bfloat16

To enable bfloat16 in the mixed precision mode, make the following changes in the config file:

    model.use_bfloat16: True
    optimizer.loss_scaling_factor: 1.0
    model.mixed_precision: True

As you can see, in addition to the settings specific to mixed precision and bfloat16, we need to disable loss scaling by setting the loss scaling factor to 1.0. As described above, bfloat16 has the same exponent size as float32, so it behaves identically with respect to underflows, overflows, and other numerical instabilities during training. The loss scaling factor was originally introduced for the mixed precision mode with float16, where the loss had to be scaled to avoid these side effects. bfloat16 does not require loss scaling and thus comes close to being a drop-in replacement for float32 when training and running deep neural networks.
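To illustrate why float16 needs loss scaling in the first place, the sketch below rounds a small value to IEEE float16 with and without a scaling factor, using the standard `struct` module. The gradient value and the factor of 2^16 are hypothetical numbers chosen for the demonstration, and the helper `to_fp16` is not part of any framework API.

```python
import struct


def to_fp16(value: float) -> float:
    """Round a Python float to IEEE binary16 and return it as a float."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


grad = 1.0e-8           # hypothetical small gradient value
loss_scale = 2.0 ** 16  # hypothetical static loss scaling factor

# Stored directly, the value is below the smallest float16 subnormal (about 6e-8).
unscaled = to_fp16(grad)

# Scale first, store in float16, then undo the scaling in higher precision.
recovered = to_fp16(grad * loss_scale) / loss_scale

print(unscaled)   # 0.0
print(recovered)  # close to 1e-8
```

A value of this size is comfortably inside the float32 exponent range, and therefore inside the bfloat16 range as well, which is why a loss scaling factor of 1.0 is sufficient with bfloat16.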

To try out some of our networks with this setting, refer to the gpt2, gpt3, and gptj references.