Cluster monitoring with Grafana#

Overview#

Grafana dashboards let you visualize, query, and explore metrics through graphs and charts. You can run targeted queries, explore the data interactively, and get additional context from integrated access to system logs and traces.

Cerebras provides two Grafana dashboards tailored to Cerebras clusters:

ML Admin dashboard

This dashboard is designed to help users and administrators visualize the overall state of the Cerebras Wafer-Scale cluster

ML User dashboard

This dashboard is specialized for monitoring and managing individual jobs running on the Cerebras Wafer-Scale cluster

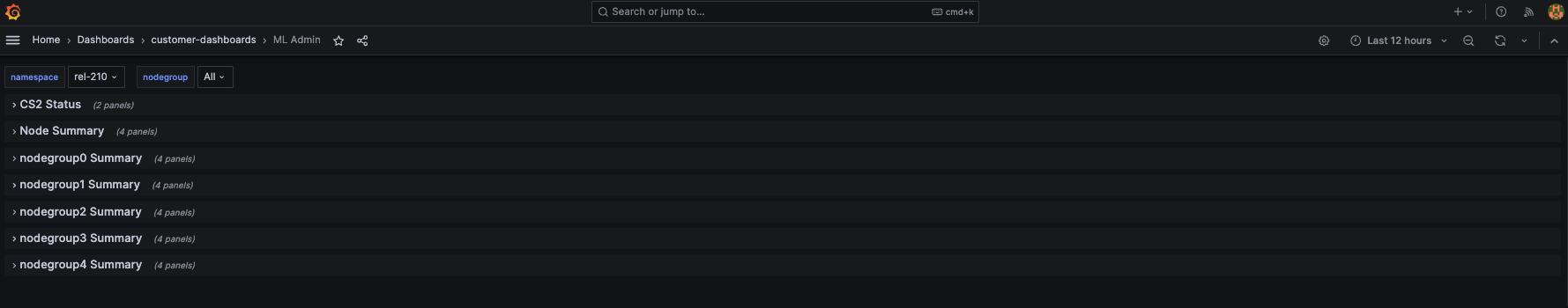

ML Admin Dashboard#

The ML Admin dashboard shows the overall state of the cluster. The following figure displays the ML Admin dashboard for the Cerebras Wafer-Scale cluster:

It includes the following:

CS-2 Status

Overall CS-2 system status and jobs running on the cluster

Node Summary

Overall CPU/Memory/Network bandwidth information on different types of nodes

Individual NodeGroup Summary

Overall CPU/Memory/Network bandwidth information for nodes inside a nodegroup

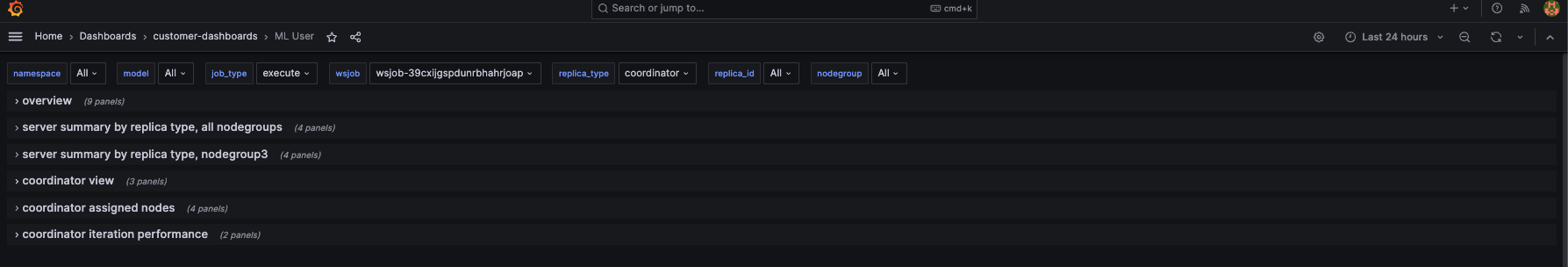

ML User Dashboard#

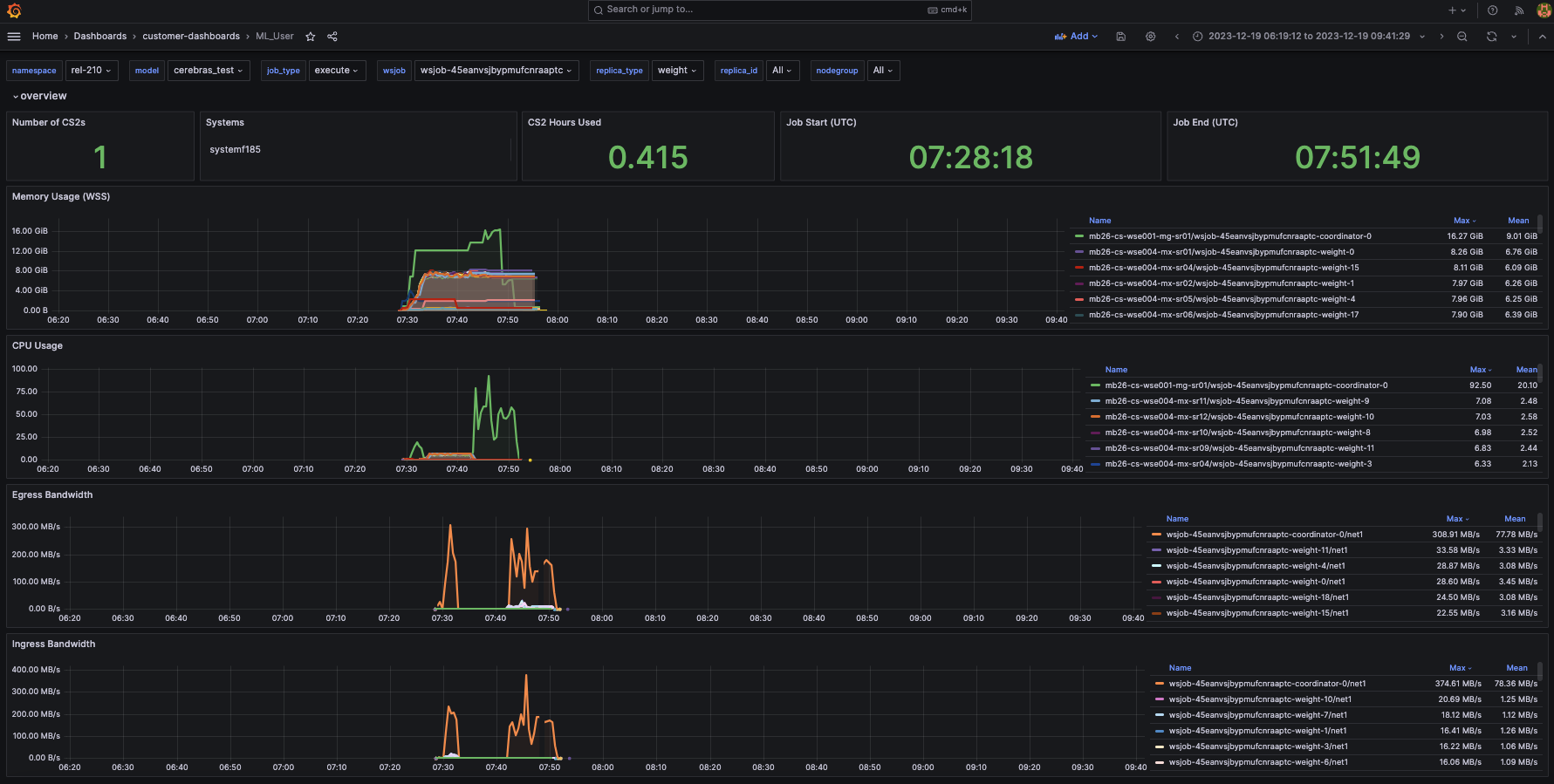

The ML User Dashboard provides job-level metrics, logs, and traces, allowing users to closely monitor the progress and resource utilization of their specific jobs. The following figure displays Cerebras’s ML User dashboard:

The following list describes the various panes in the dashboard:

Overview

Displays an overview of memory/CPU/network bandwidth numbers for all replicas of the selected job

Server summary by replica type, all nodegroups

Displays summary CPU/Memory/Network bandwidth for each replica type in all nodegroups

Server summary by replica type, individual nodegroup

Displays summary CPU/Memory/Network bandwidth for each replica type in a single nodegroup

Replica view

Displays memory/CPU/network bandwidth numbers for each replica_id of this replica_type in each chart. replica_type represents a type of service process for a given job. It can be one of these types: weight, command, activation, broadcastreduce, chief, worker, coordinator. replica_id corresponds to the specific replica for a job and a replica type.

Assigned nodes

Displays the statuses of the physical nodes that are assigned to the chosen replica_type and replica_id

MemX performance

Shows iteration-based performance, iteration time, cross-iteration time, and backward iteration time

There are various filters users can select:

wsjob

Indicates the ID of the weight-streaming run, which is used to select between different runs on a particular system

replica_type

Allows selecting between the activation, weight, and all server metrics

nodegroup

Selects a nodegroup to show server summaries

Other useful fields are model, job_type, and replica_id.
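Grafana generally allows dashboard variables to be preset through var-<name> query parameters in the dashboard URL. The following is a minimal sketch, assuming the filters above are exposed as dashboard variables under the same names (wsjob, replica_type, nodegroup). The host name, dashboard path, and job ID are hypothetical placeholders, not values taken from a Cerebras installation.

```python
from urllib.parse import urlencode


def dashboard_url(base_url, dashboard_path, **filters):
    """Build a dashboard link with Grafana-style var-<name> query parameters."""
    # Skip filters left unset so the dashboard falls back to its own defaults.
    params = {
        f"var-{name}": value
        for name, value in filters.items()
        if value is not None
    }
    url = f"{base_url.rstrip('/')}/{dashboard_path.lstrip('/')}"
    query = urlencode(params)
    return f"{url}?{query}" if query else url


# Hypothetical host, dashboard path, and job ID, for illustration only.
example_url = dashboard_url(
    "https://grafana.mycluster.example.com",
    "/d/ml-user/ml-user-dashboard",
    wsjob="wsjob-abc123",
    replica_type="weight",
    nodegroup=None,
)
print(example_url)
```

Whether a given variable name is honored depends on how the dashboard defines its variables, so check the variable names in the dashboard settings before relying on such links.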

Prerequisites#

You have access to the user node in the Cerebras Wafer-Scale cluster. Contact Cerebras Support for any system configuration issues.

You can run a port-forwarding SSH session through the user node from your machine with this command:

$ ssh -L 8443:grafana.<cluster-name>.<domain>.com:443 myUser@usernode

Note

This command uses the local port 8443 to forward the traffic. You can choose any unoccupied port on your machine.
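To choose an unoccupied local port before starting the session, a small check such as the following may help. This is a sketch only: the helper names are hypothetical, and the host, user, and node names in the usage section are placeholders for your own values.

```python
import socket


def port_is_free(port, host="127.0.0.1"):
    """Return True if nothing is currently bound to host:port."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            return False
        return True


def ssh_forward_command(local_port, grafana_host, user, usernode):
    """Return the argument list for the port-forwarding command shown above."""
    return ["ssh", "-L", f"{local_port}:{grafana_host}:443", f"{user}@{usernode}"]


if __name__ == "__main__":
    # Try 8443 first, then a few neighbouring ports (an arbitrary choice).
    for candidate in range(8443, 8453):
        if port_is_free(candidate):
            cmd = ssh_forward_command(
                candidate, "grafana.mycluster.example.com", "myUser", "usernode"
            )
            print(" ".join(cmd))
            break
```

The check is advisory: another process can still claim the port between the check and the moment ssh binds to it.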

How to get access?#

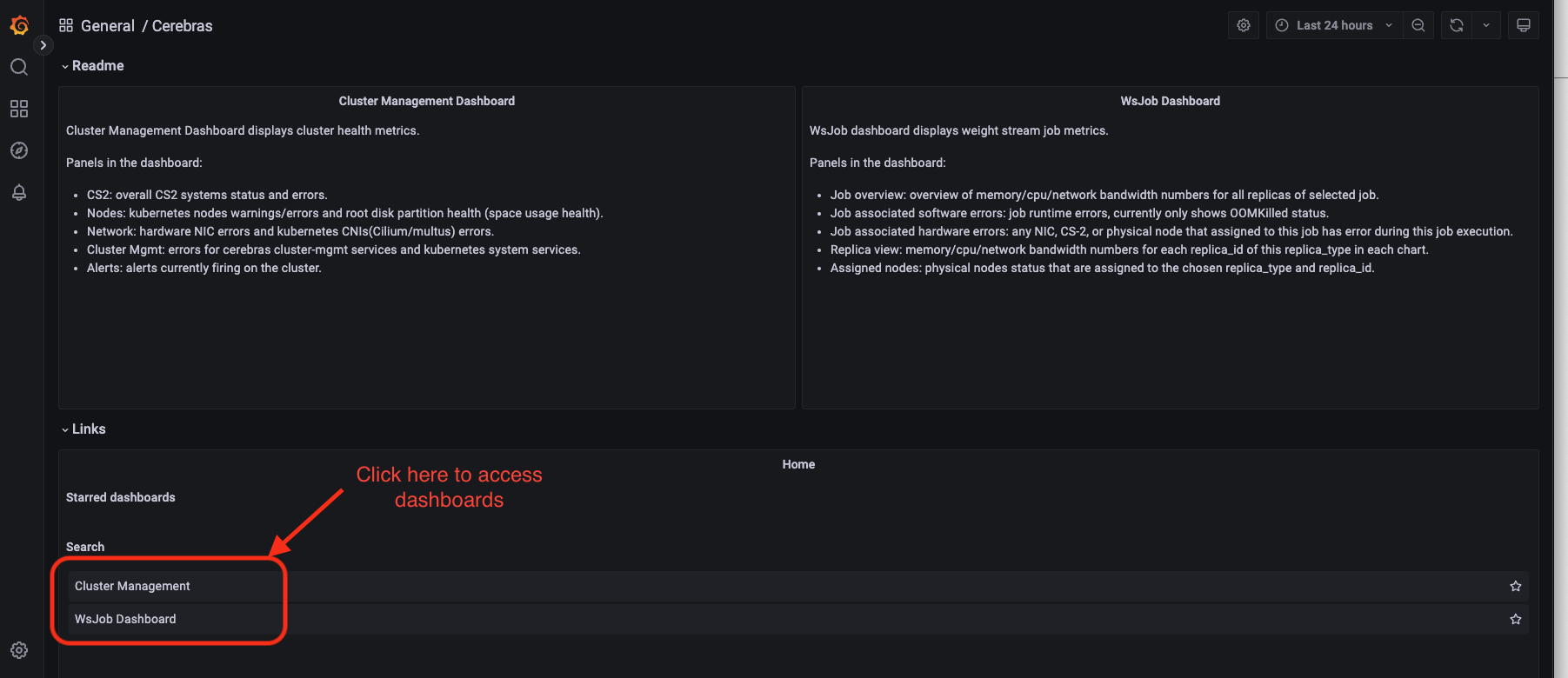

Links are accessible from the General/Cerebras tab. The following figure displays a Cerebras dashboard:

Steps to get access#

1. Ask your system administrator to set up the Grafana database. URLs come in the format:

grafana.<cluster-name>.<domain>.com

For example: grafana.mb-systemf102.cerebras.com

2. Get authentication credentials for Grafana (username and password) from your system administrator.

3. Add the Grafana TLS certificate to your browser keychain. The certificate is located at /opt/cerebras/certs/grafana_tls.crt on the user node, where it is copied during the user node installation process. Download it to your local machine and add it to your browser keychain.

On a Chrome browser on Mac OS:

Go to Preferences -> Privacy and Security -> Security -> Manage Certificates

Add grafana-tls.crt into System keychain certificates. Make sure to set Always Trust when using this certificate.

Next, edit your local machine’s /etc/hosts file to point the IP of the user node to Grafana:

<USERNODE_IP> grafana.<cluster-name>.<domain>.com

Finally, navigate in your browser to the URL https://grafana.<cluster-name>.<domain>.com to access the Grafana Dashboards.
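The /etc/hosts entry and the browser URL both follow from the cluster name and domain. The sketch below derives them with hypothetical helper functions. The cluster name and domain come from the example URL in step 1, and the IP address is a documentation-range placeholder, not a real user node address.

```python
import ipaddress


def grafana_hostname(cluster_name, domain):
    """Host name in the grafana.<cluster-name>.<domain>.com format."""
    return f"grafana.{cluster_name}.{domain}.com"


def hosts_entry(usernode_ip, cluster_name, domain):
    """Line to add to /etc/hosts: '<USERNODE_IP> <grafana host name>'."""
    # Raises ValueError if the address is not a valid IPv4 or IPv6 address.
    ipaddress.ip_address(usernode_ip)
    return f"{usernode_ip} {grafana_hostname(cluster_name, domain)}"


def grafana_url(cluster_name, domain):
    """URL to open in the browser once the hosts entry is in place."""
    return f"https://{grafana_hostname(cluster_name, domain)}"


# 192.0.2.10 is a placeholder from the documentation address range.
print(hosts_entry("192.0.2.10", "mb-systemf102", "cerebras"))
print(grafana_url("mb-systemf102", "cerebras"))
```

The script only prints the line. Editing /etc/hosts itself normally requires administrator rights and is left as a manual step.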

Viewing performance metrics using the ML User dashboard#

You can view cluster iteration-performance metrics by tracking update times across the weight servers.

Our current dashboard implementation shows iteration time, forward-iteration time, backward-iteration time, cross-iteration time, and input starvation.

Iteration time

Indicates the time from the end of iteration “i-1” on the weight servers to the end of iteration “i” on the weight servers.

Forward-iteration time

Indicates the time spent in iteration “i” during the forward pass.

Backward-iteration time

Indicates the time spent in iteration “i” during the backward pass.

Cross-iteration time

Indicates the time from the last gradient received in an iteration to the first weight sent. A high value indicates an optimizer performance bottleneck.

Input starvation

Indicates the time spent waiting on the framework to receive activations.

These statistics are shown in the following image and can be used to identify performance bottlenecks in the training process:
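The definitions of iteration time and cross-iteration time can be restated as simple arithmetic on timestamps. The sketch below is illustrative only: it assumes timestamps in seconds and uses made-up values, and it does not reflect how the dashboard itself computes or stores these metrics.

```python
def iteration_times(iteration_end_times):
    """Time from the end of iteration i-1 to the end of iteration i."""
    return [
        curr - prev
        for prev, curr in zip(iteration_end_times, iteration_end_times[1:])
    ]


def cross_iteration_time(last_gradient_received, first_weight_sent):
    """Time from the last gradient received to the first weight sent."""
    return first_weight_sent - last_gradient_received


# Hypothetical end-of-iteration timestamps on the weight servers, in seconds.
ends = [10.0, 12.5, 15.0, 18.0]
print(iteration_times(ends))             # [2.5, 2.5, 3.0]
print(cross_iteration_time(14.5, 15.0))  # 0.5
```

In this made-up trace the last iteration takes longer than the others. A growing iteration time alongside a high cross-iteration time would point toward the optimizer, following the definition above.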

Viewing utilization metrics using the ML User Dashboard#

The following figure shows the overview status for a job, including the list of CS-2s, start and end time, memory/cpu/network usage for different replicas in a job:

The Overview pane, the Server summary by replica type, all nodegroups pane, and the Server summary by replica type, individual nodegroup pane display memory/CPU/network bandwidth numbers relevant to a job at different levels of granularity. The Overview pane shows the metrics for all replicas in a job and the systems used by the job. The two Server summary by replica type panes show the metrics for all nodegroups or for an individual nodegroup.

The Replica view metric displays memory/cpu/network bandwidth numbers for each replica_id of this replica_type in each chart. Replica_type represents a type of service process for a given job. It can be one of these types: weight, command, activation, broadcastreduce, chief, worker, and coordinator.

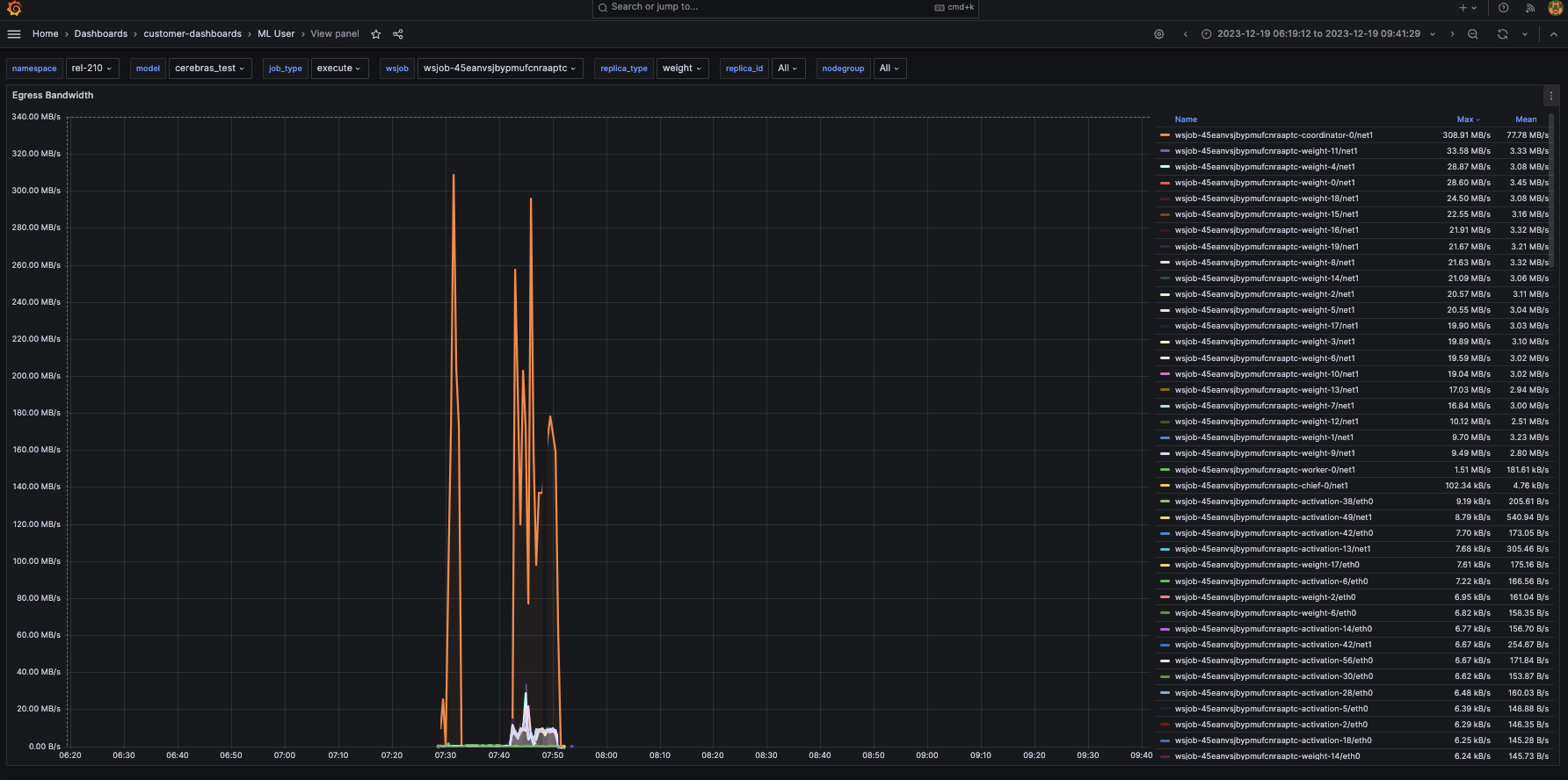

1. Egress bandwidth indicates each supporting server’s maximum and mean network egress speeds. This can help you determine whether a job is network-bound, for example by checking the transmission speed of a lagging node.

The following figure shows that weight servers achieve a maximum network transmit speed of ~33 MB/s:

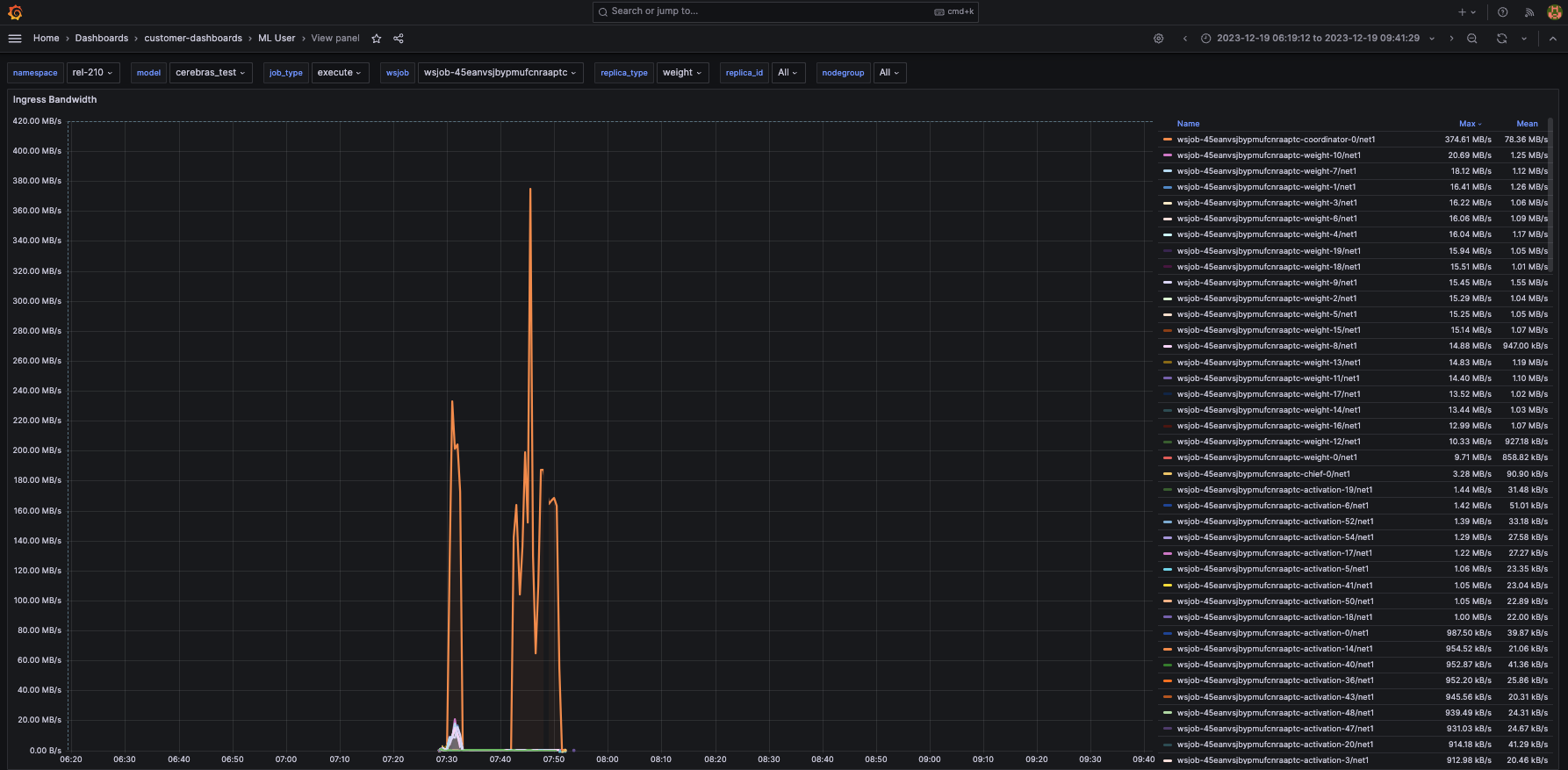

2. Ingress bandwidth denotes the ingress speeds for each supporting server. For example, in this instance, the weight servers have an average ingress speed of around 20 MB/s.

The following figure shows the ingress bandwidth metric:

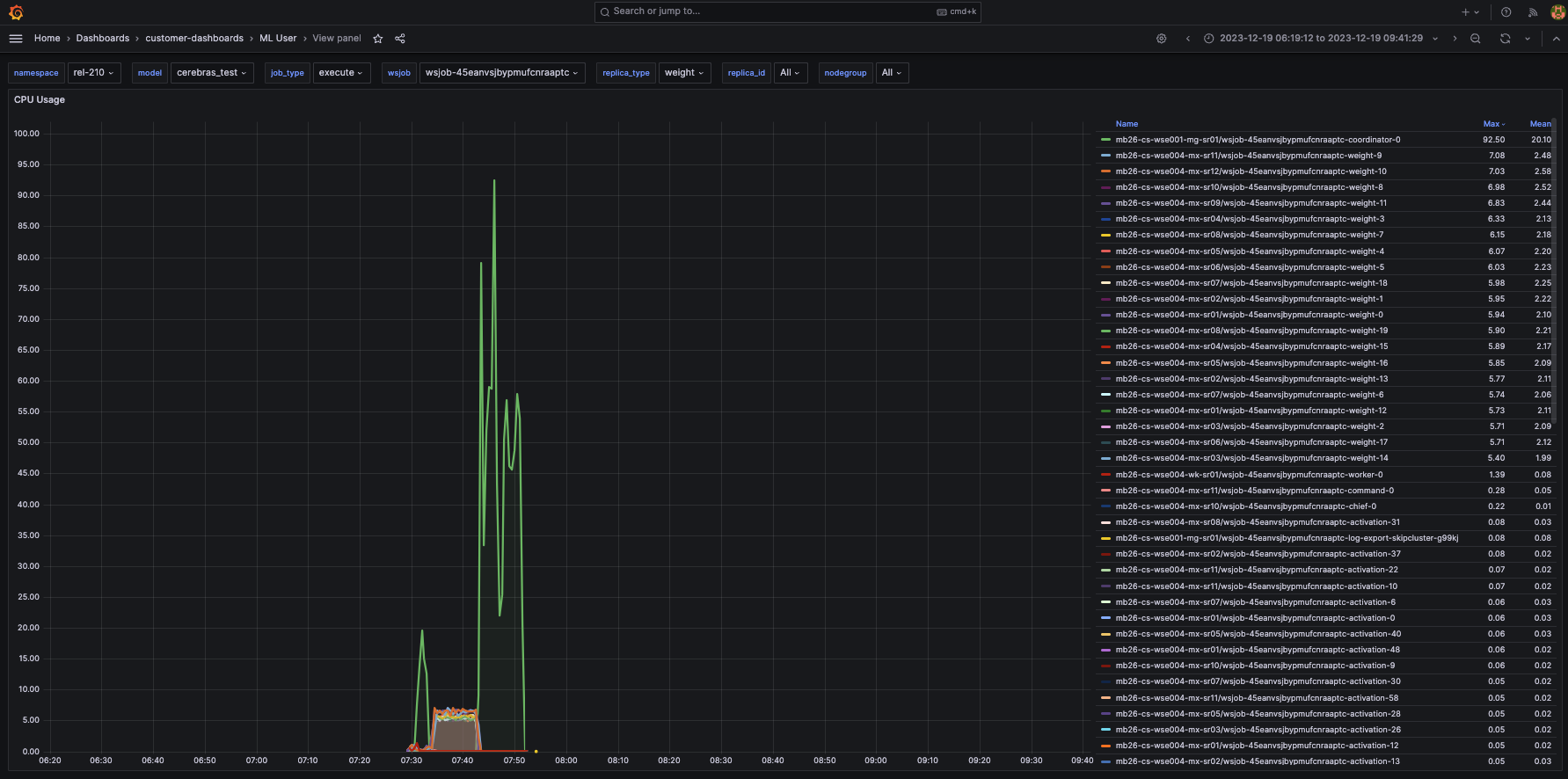

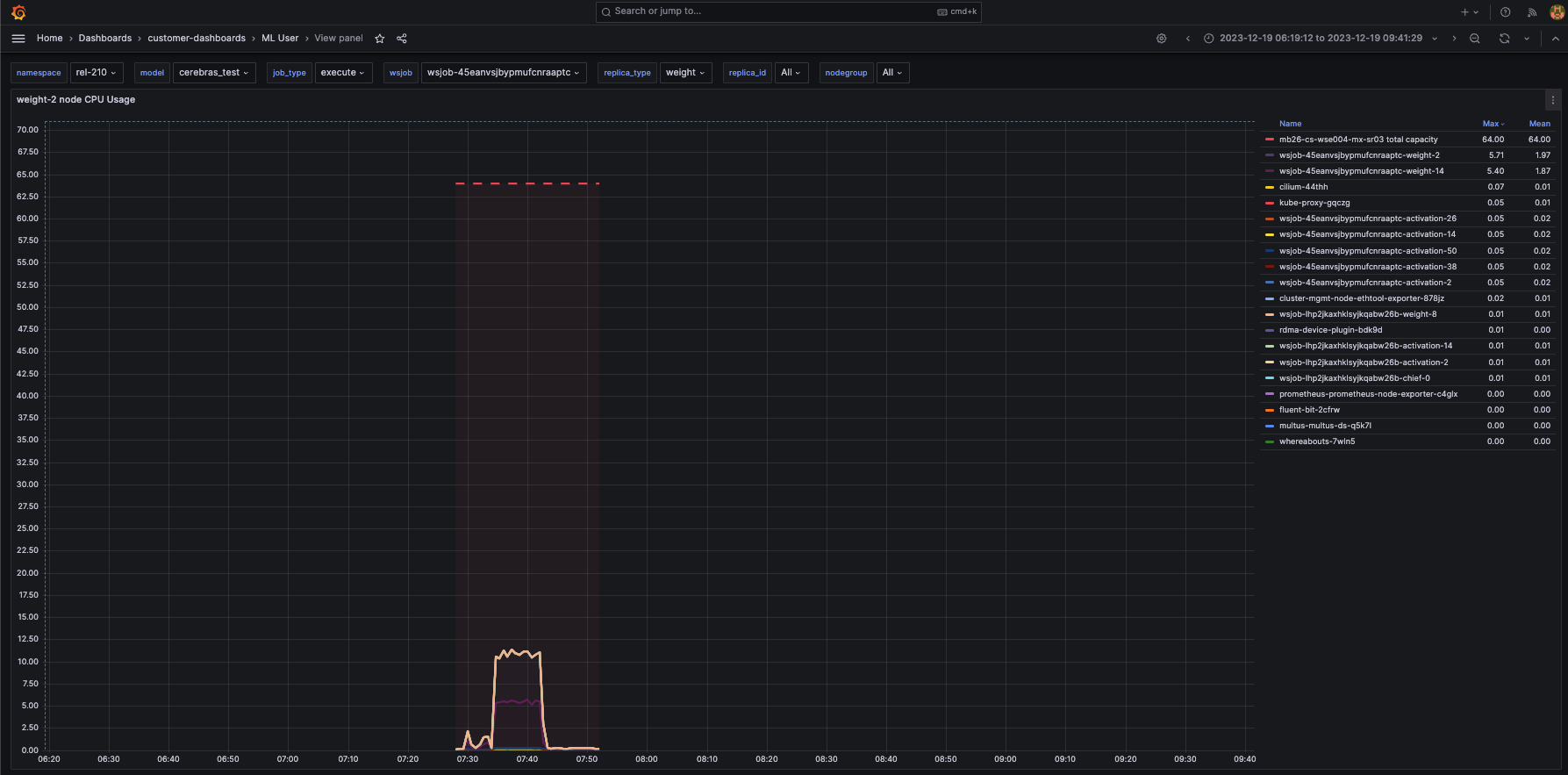

3. CPU usage shows the CPU percentage utilization for each weight server. In this case, the CPUs are only 5-7% utilized.

The following figure shows the CPU usage metric:

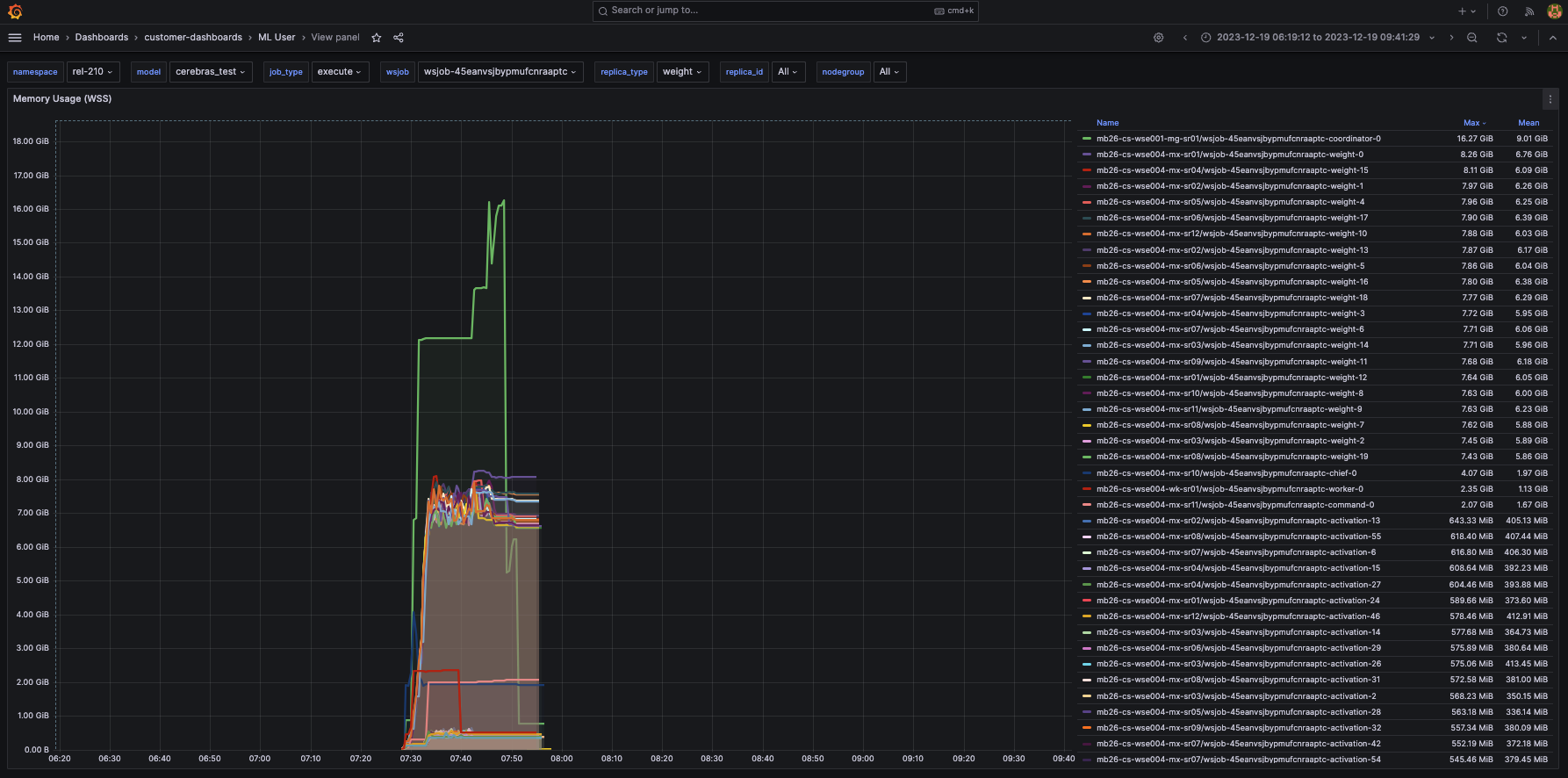

4. Memory usage indicates the maximum and mean amounts of memory each weight server uses over time. This can be useful in debugging whether the weight servers are memory-bound. For more information on memory requirements, visit Resource requirements for parallel training and compilation.

The following figure shows the memory usage metric:

5. You can use the Grafana interface to show individual metrics for a particular node that runs a replica. For example, these are the views for CPU and memory usage for the node that runs the weight-2 replica:

The following figure shows the CPU usage per node metric:

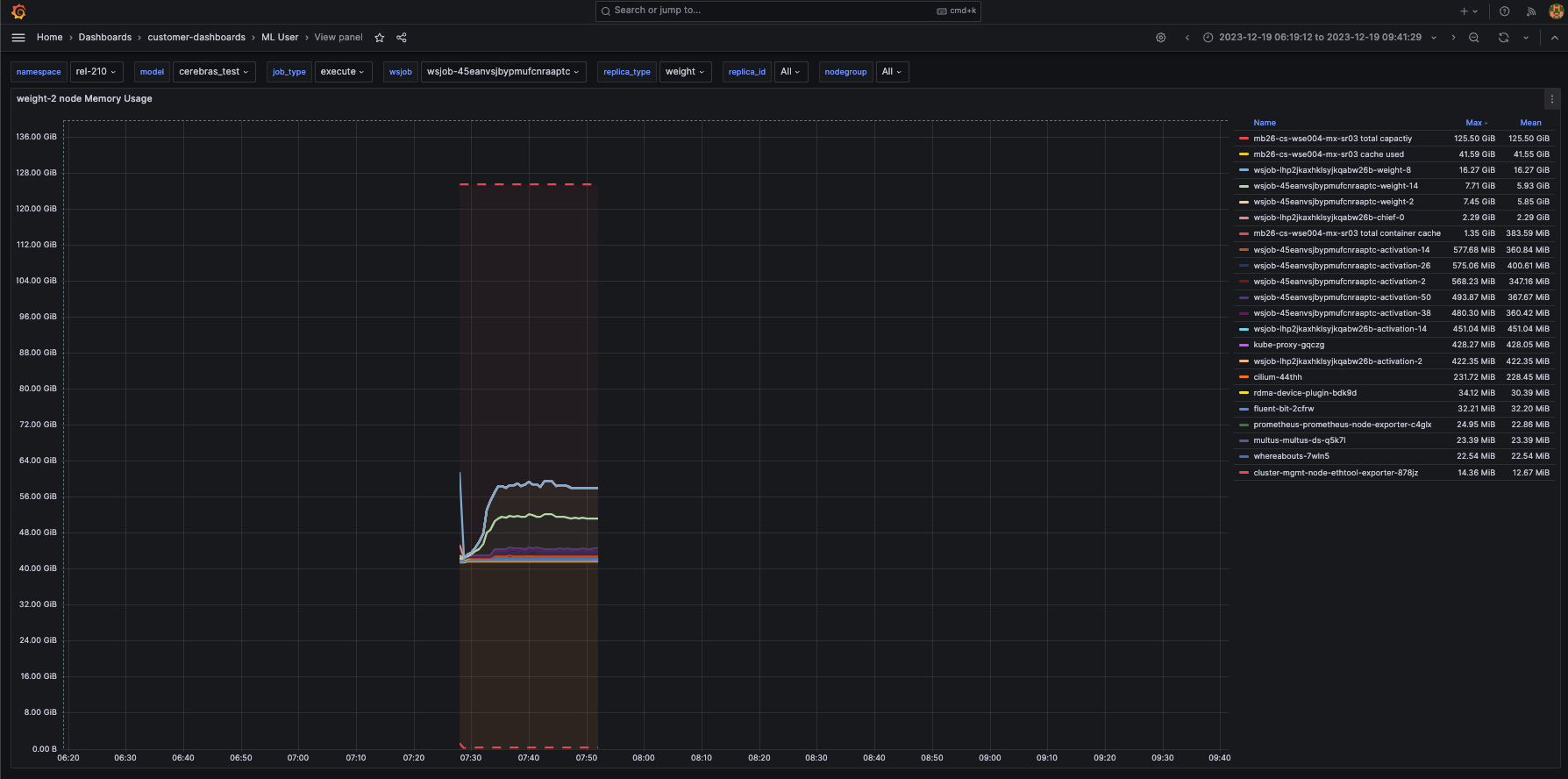

The following figure shows the memory usage per node metric:
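The maximum and mean values shown in these panes can also be reproduced from raw samples, for example to spot a lagging server when checking whether a job is network-bound. The sketch below assumes per-server samples that have already been exported from Grafana by some means. The sample values, server names, and the 50% threshold are arbitrary illustrations, not Cerebras recommendations.

```python
from statistics import mean


def summarize(samples_by_server):
    """Return {server: (max, mean)} for per-server metric samples."""
    return {
        name: (max(values), mean(values))
        for name, values in samples_by_server.items()
        if values
    }


def lagging_servers(samples_by_server, fraction=0.5):
    """Servers whose mean is below `fraction` of the average server mean."""
    summary = summarize(samples_by_server)
    overall = mean(server_mean for _, server_mean in summary.values())
    return sorted(
        name
        for name, (_, server_mean) in summary.items()
        if server_mean < fraction * overall
    )


# Hypothetical egress bandwidth samples in MB/s.
egress = {
    "weight-0": [30.0, 33.0, 27.0],
    "weight-1": [28.0, 32.0, 30.0],
    "weight-2": [8.0, 10.0, 6.0],
}
print(summarize(egress)["weight-0"])  # (33.0, 30.0)
print(lagging_servers(egress))        # ['weight-2']
```

The same summary applies to ingress bandwidth, CPU usage, or memory usage samples. Only the units and a sensible threshold differ.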