CosineAnnealingLR

class modelzoo.common.pytorch.optim.lr_scheduler.CosineAnnealingLR (optimizer: torch.optim.optimizer.Optimizer, initial_learning_rate: float, T_max: int, eta_min: float, disable_lr_steps_reset: bool = False)

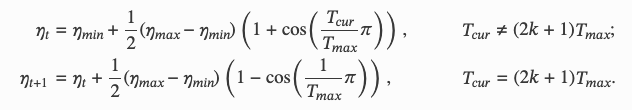

Set the learning rate of each parameter group using a cosine annealing schedule, where $\eta_{max}$ is set to the initial learning rate and $T_{cur}$ is the number of steps since the last restart in SGDR:

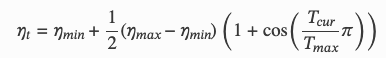

Because the schedule is defined recursively, other operators can modify the learning rate outside this scheduler at the same time. If the learning rate is set solely by this scheduler, the learning rate at each step becomes:

$$\eta_t = \eta_{min} + \frac{1}{2}\left(\eta_{max} - \eta_{min}\right)\left(1 + \cos\left(\frac{T_{cur}}{T_{max}}\pi\right)\right)$$

This schedule was proposed in SGDR: Stochastic Gradient Descent with Warm Restarts. Note that this class implements only the cosine annealing part of SGDR, not the restarts.
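To make the closed form concrete, here is a minimal pure-Python sketch of the learning rate when nothing else modifies it. It is not the modelzoo implementation: the helper name `cosine_annealing_lr` and the example values are hypothetical. It assumes the standard closed form, in which the rate at step `T_cur` is `eta_min + 0.5 * (eta_max - eta_min) * (1 + cos(pi * T_cur / T_max))`, with `eta_max` equal to `initial_learning_rate` and no restarts.

```python
import math


def cosine_annealing_lr(step, initial_learning_rate, T_max, eta_min):
    """Closed-form cosine annealing (hypothetical helper, not the modelzoo code).

    Assumes eta_max == initial_learning_rate and no restarts, so T_cur == step.
    """
    cosine = math.cos(math.pi * step / T_max)
    return eta_min + 0.5 * (initial_learning_rate - eta_min) * (1.0 + cosine)


# Example values chosen purely for illustration.
schedule = [cosine_annealing_lr(s, 0.1, 100, 0.001) for s in range(101)]
```

Under these assumptions, the schedule starts at `initial_learning_rate` at step 0, passes through the midpoint of the two rates at `T_max / 2`, and reaches `eta_min` at step `T_max`.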

This class is similar to https://pytorch.org/docs/stable/generated/torch.optim.lr_scheduler.CosineAnnealingLR.html#torch.optim.lr_scheduler.CosineAnnealingLR

Parameters:

- optimizer (torch.optim.optimizer.Optimizer) – The optimizer to schedule
- initial_learning_rate (float) – The initial learning rate
- T_max (int) – Maximum number of iterations
- eta_min (float) – Minimum learning rate