Incremental Compile


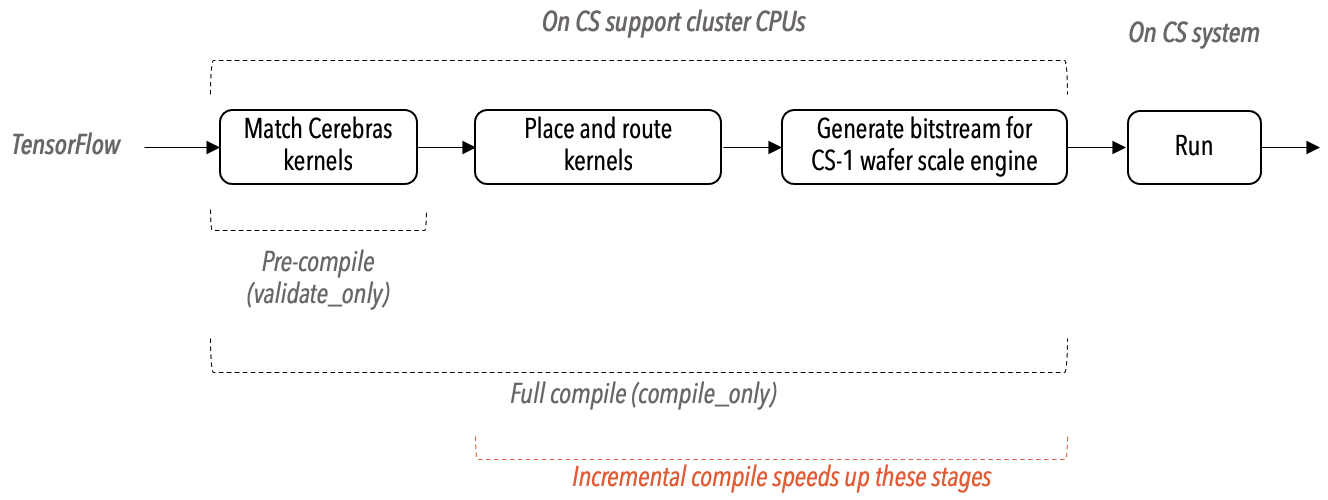

When you compile a TensorFlow program that contains a CerebrasEstimator with the Cerebras Graph Compiler (CGC), the CGC applies a sequence of transformations to the program. During this process, the compiler performs a series of optimizations that translate the neural network described in the TensorFlow program into an executable bitstream for the CS system.

When you fine-tune a neural network model, you will likely compile the same model multiple times, each time with a different set of hyperparameters. After the first compile, the incremental compile feature of CGC automatically speeds up subsequent compile runs by reusing, wherever possible, the optimizations already performed. See the high-level CGC flow diagram.

Scope of incremental compilation

The CGC performs incremental compilation when the only change between compile runs is in the values of hyperparameters, with no change to the structure of the model or to the resources it requires. When the value of any supported hyperparameter changes after a compile run, the next compile run is performed incrementally and finishes more quickly.

Currently the following hyperparameters are supported for incremental compile:

- Dropout rate
- Static learning rate
- Learning rate (LR) schedule changes within the same schedule
- Static loss scaling factors
- Dynamic loss scaling (setting max/min scales, num_steps)
- Constant values in:
  - Optimizers (for example, beta1, beta2, epsilon)
  - Normalization layers (for example, alpha_fwd, alpha_bwd)
- Global gradient clipping (changing max_gradient_norm or the value in clip_by_value)

Note

CGC activates incremental compile automatically when it detects changes in the supported hyperparameters listed above.
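The scope rule can be illustrated with a small Python sketch that compares two flat parameter dictionaries and reports whether the differences are limited to hyperparameter values. This is only an illustration of the rule as stated on this page, not CGC's detection logic. The key names in `SUPPORTED_KEYS`, the flat dictionary layout, and the function `classify_change` are all hypothetical; CGC itself decides whether a real compile run is incremental.

```python
# Illustrative only: key names and the flat-dict layout are assumptions,
# not part of the Cerebras API. CGC makes the real decision internally.
SUPPORTED_KEYS = {
    "dropout_rate",
    "learning_rate",
    "loss_scaling_factor",
    "beta1", "beta2", "epsilon",   # optimizer constants
    "alpha_fwd", "alpha_bwd",      # normalization-layer constants
    "max_gradient_norm",
    "clip_by_value",
}

_MISSING = object()  # sentinel so that a missing key differs from a None value


def changed_keys(old, new):
    """Return the set of keys whose values differ between two flat dicts."""
    all_keys = old.keys() | new.keys()
    return {
        key for key in all_keys
        if old.get(key, _MISSING) != new.get(key, _MISSING)
    }


def classify_change(old, new):
    """Classify the difference between two parameter sets.

    "unchanged":            no value differs
    "hyperparameters-only": every changed key is in SUPPORTED_KEYS
    "structural":           at least one changed key is outside SUPPORTED_KEYS
    """
    changed = changed_keys(old, new)
    if not changed:
        return "unchanged"
    if changed <= SUPPORTED_KEYS:
        return "hyperparameters-only"
    return "structural"


base = {
    "hidden_size": 768,
    "num_layers": 12,
    "dropout_rate": 0.1,
    "learning_rate": 1e-4,
    "beta1": 0.9,
}
tuned = dict(base, dropout_rate=0.2, learning_rate=5e-5)  # values only
wider = dict(base, hidden_size=1024)                      # model structure

print(classify_change(base, tuned))
print(classify_change(base, wider))
```

Under these assumptions, the `tuned` run corresponds to the case that this page describes as eligible for incremental compile, while the `wider` run changes the model structure and would need a full compile.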